XDP Internals: Hooks, Driver Integration, and Zero-Copy Networking

Understanding the Problem

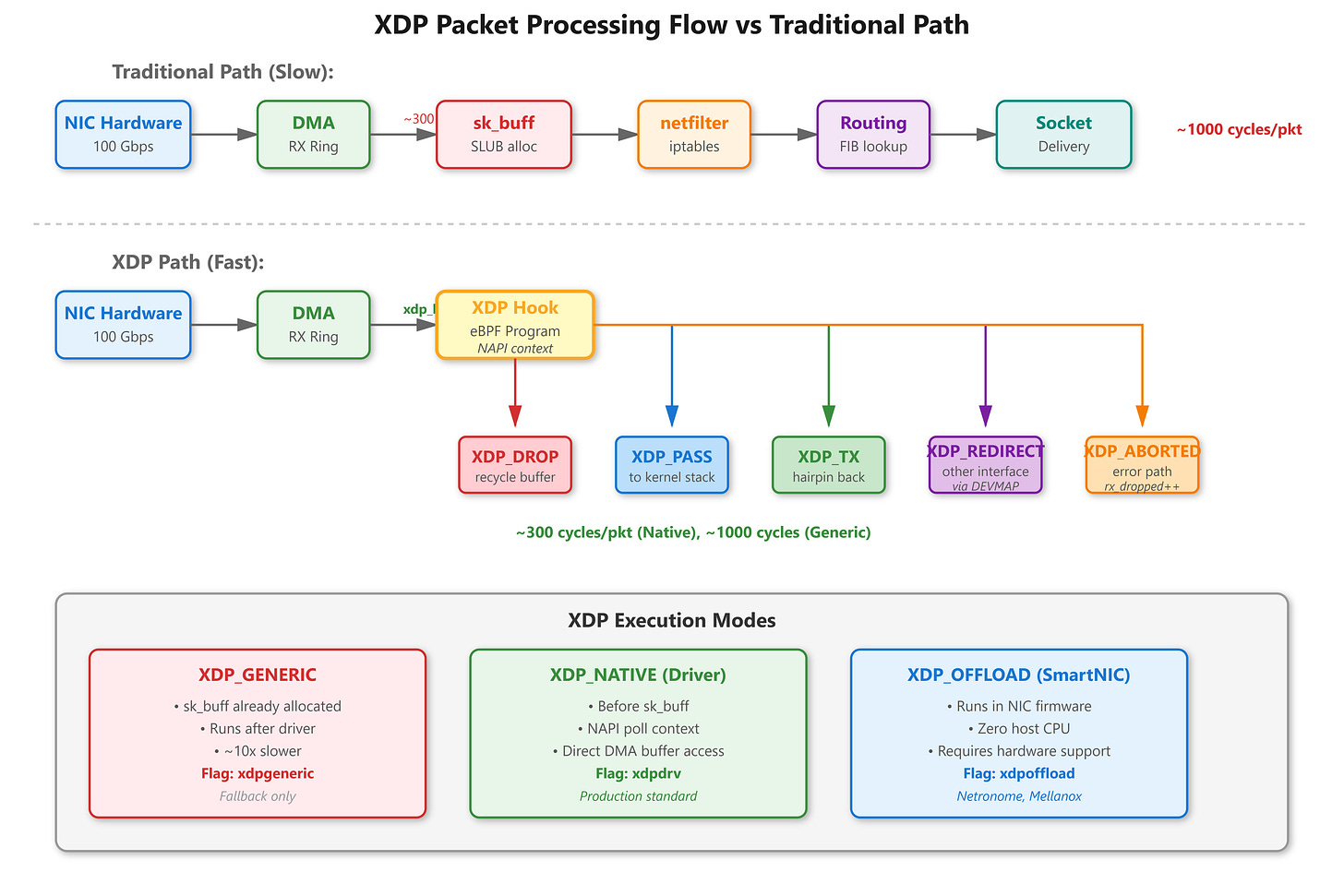

When Cloudflare needs to drop 300 million packets per second during a DDoS attack, iptables isn’t an option. The kernel’s network stack, with its sk_buff allocations, netfilter hooks, and routing decisions, becomes the bottleneck. XDP (eXpress Data Path) solves this by running eBPF programs in the network driver’s receive path, before the kernel allocates an sk_buff for the packet. This is packet processing at the earliest point software can reach, and getting it right requires understanding driver integration, memory management, and zero-copy mechanics.

The sk_buff Problem

Every packet entering Linux normally triggers an sk_buff allocation: roughly 200-300 bytes from the SLUB allocator, plus the cache misses and metadata initialization that come with it. At 100 Gbps with 64-byte packets (about 148.8 million packets/sec), this per-packet overhead destroys performance. The system spends more time allocating and freeing sk_buff structures than processing packets. CPU time shifts almost entirely into kernel-side receive processing, the cores fall behind, and eventually the receive ring overflows. At that point the NIC drops packets before the kernel ever sees them, and the loss shows up only in the NIC's drop counters.
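The packet rate quoted above follows from Ethernet framing arithmetic, and working it through shows how little time each packet gets. The sketch below is a minimal illustration in C, assuming the standard 20 bytes of per-frame wire overhead (preamble, start-of-frame delimiter, and inter-frame gap). The function and macro names are hypothetical helpers for this example, not part of any kernel API.

```c
/* Per-frame bytes on the wire that are not counted in the frame size:
 * 7-byte preamble + 1-byte start-of-frame delimiter + 12-byte inter-frame gap.
 * Assumption: standard Ethernet framing. */
#define ETH_WIRE_OVERHEAD_BYTES 20

/* Maximum frames per second a link can carry for a given frame size.
 * frame_bytes includes the 4-byte FCS (64 for a minimum-size frame). */
static double wire_rate_pps(double link_bps, unsigned frame_bytes)
{
    double bits_per_frame = (frame_bytes + ETH_WIRE_OVERHEAD_BYTES) * 8.0;
    return link_bps / bits_per_frame;
}

/* Average time available per packet, in nanoseconds, at a given rate. */
static double per_packet_budget_ns(double pps)
{
    return 1e9 / pps;
}
```

Calling `wire_rate_pps(100e9, 64)` gives roughly 148.8 million packets per second, and `per_packet_budget_ns` on that result gives about 6.7 ns per packet across the whole machine. That budget is far smaller than what a per-packet slab allocation and a few cache misses can cost, which is why the allocation itself becomes the limiting factor.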